⏳ AI stole his song. He earned NOTHING.

Chart #2 globally. 6 country #1s. Here's how:

Plus: Apple's 33%/0.05% AI gap, OpenPlay's quiet OS for labels, and a $5B vs $11B race.

Issue #1 · Friday, May 8, 2026 · ~9 min read

The Friday read for music industry professionals working at the intersection of AI and the traditional music business.

An AI clone of his song hit #1 in 6 countries. He earned nothing.

Indie reggae artist Stick Figure (Scott Woodruff) released Angels Above Me in 2019. This week, an AI-generated version of it, with a female AI voice over a techno rework, hit #2 on the global Shazam chart and #1 on the iTunes charts in Germany, Austria, Belgium, Denmark, Switzerland, and Ireland (#2 in the UK). Tens of thousands of dollars in royalties flowed to anonymous uploaders. He earned nothing until he used his own platform to manually route the rights back.

Deezer reports 75,000 AI-generated tracks uploaded daily, 44% of all new uploads, with 85% of streams on AI content flagged as fraudulent.

A January 2026 AI version of Stromae's Papaoutai reached Spotify's Global Chart at #168 with 14 million streams.

The CISAC/PMP study estimates up to €4 billion (25% of creators' revenues) is at risk by 2028.

This week, the enforcement gap stopped being theoretical. Existing fingerprinting catches identical audio. AI derivatives are transformations, so they slip through. Labeling standards (Spotify DDEX, Apple Transparency Tags, TikTok strikes, Deezer demotion, Bandcamp ban) don't help either, because they assume the uploader will disclose.
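Why does a transformation slip through? Here is a deliberately simplified model of peak-pair fingerprinting. It is a toy under stated assumptions, not how any commercial detector actually works: each audio frame is reduced to one dominant pitch bin, the hashes are pairs of consecutive bins, and the "AI rework" is assumed to be the same melody sung three bins higher by a new voice. An exact re-upload matches perfectly. The rework shares almost nothing.

```python
# Toy model only: one dominant pitch bin per frame, hashed as consecutive pairs,
# loosely in the spirit of peak-pair ("landmark") fingerprinting.
# The melody and the +3 transposition are hypothetical illustration values.

def fingerprint(peaks: list[int]) -> set[tuple[int, int]]:
    """Hash each pair of consecutive dominant-pitch bins."""
    return set(zip(peaks, peaks[1:]))


def similarity(a: list[int], b: list[int]) -> float:
    """Jaccard overlap between two fingerprints (1.0 means identical hash sets)."""
    fa, fb = fingerprint(a), fingerprint(b)
    return len(fa & fb) / len(fa | fb)


original = [60, 62, 64, 65, 67, 65, 64, 62]  # hypothetical melody, as pitch bins
reupload = list(original)                     # the same recording uploaded again
ai_rework = [p + 3 for p in original]         # assumed: same tune, new voice, new key

print(similarity(original, reupload))             # 1.0
print(round(similarity(original, ai_rework), 3))  # 0.167
```

Real systems hash spectrogram peaks with time offsets and survive noise and compression, but they key on a specific recording. A new performance in a new key and tempo produces new peaks, and that is the gap described below.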

"Real money taken from him because someone was able to bypass all existing detection and submit to a distributor. Since the AI generated song created a new 'style', typical fingerprinting companies could not detect." Rasha Rahman, founder, HumanStandard, on LinkedIn.

The supply problem is now structural. Apple Music VP Oliver Schusser confirmed this week that AI tracks are roughly 33% of new submissions to Apple Music but under 0.05% of actual listening. The flood is real. The listening isn't. The piece nobody has solved is what happens when one of the 75,000 AI tracks Deezer receives each day turns out to be a transformed copy of a song that already has a fanbase. That's the next two years of the rights conversation.

In today's briefing

Top Story: OpenPlay's Pulse, the AI-native OS quietly being built for labels.

Music Intelligence: APEX paper, the HumanStandard fix, and the catalog-as-API pattern.

High Signal News

[Rights] AI clone of Stick Figure's Angels Above Me hits #2 on global Shazam, #1 in 6 European iTunes charts.

An unauthorized AI cover (female AI vocal, techno rework, identical lyrics and structure) reached #2 on global Shazam, #1 in Germany, Austria, Belgium, Denmark, Switzerland, Ireland, and #2 in the UK. Tens of thousands in royalties flowed to anonymous uploaders. Existing fingerprinting failed because the version is a transformation, not an exact copy.

What this means for you → If you're an artist, register your catalog with a service that detects AI derivatives, not just identical audio. If you're a label or distributor, your current Content ID is a bypass test waiting to be failed.

[DSP] Apple Music VP: AI is 33% of submissions, under 0.05% of listening. Apple has model-level detection.

Oliver Schusser disclosed the cleanest data point yet on the AI flood: roughly one-third of new submissions to Apple Music are AI-generated, but actual listening sits under 0.05%. Apple has built in-house tech that identifies not only whether a track is AI-generated but which model produced it. Fraud penalties now range 10% to 50% of earned royalties (up from 5% to 25%). Apple now requires labels and distributors to disclose AI use.

Barrett Media's David Hill compiled the side-by-side: Spotify supports the DDEX AI-disclosure standard (voluntary, since September 2025). Apple Music shipped Transparency Tags in March 2026. TikTok issues immediate strikes for unlabeled AI content. Deezer tags AI and removes it from algorithmic recommendations. Bandcamp banned it outright. Deezer's own research found 80% of listeners want fully AI-generated music labeled, and 52% say it doesn't belong in main charts.
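For a sense of scale, here is the Apple fraud-penalty change worked through on a hypothetical $10,000 in earned royalties. The sketch assumes the penalty is a flat percentage of the royalties earned by the flagged tracks. Apple's actual mechanics (which rate within the band applies, and to what base) aren't described in the disclosure.

```python
# Penalty bands as reported: share of earned royalties withheld for fraud.
OLD_BAND = (0.05, 0.25)  # previous band
NEW_BAND = (0.10, 0.50)  # current band


def penalty_band(royalties: float, band: tuple[float, float]) -> tuple[float, float]:
    """Return the (minimum, maximum) penalty for a given amount of earned royalties."""
    low, high = band
    return royalties * low, royalties * high


earned = 10_000.0  # hypothetical royalties on flagged tracks, in dollars
for label, band in (("old", OLD_BAND), ("new", NEW_BAND)):
    low, high = penalty_band(earned, band)
    print(f"{label}: ${low:,.0f} to ${high:,.0f}")
# old: $500 to $2,500
# new: $1,000 to $5,000
```

Both ends of the band doubled, so the worst case for a flagged uploader went from a quarter of earnings to half.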

[M&A] Suno targets $5B as ElevenLabs hits $11B. Capital is splitting between scale and licensing.

Suno is raising at a reported $5B valuation (more than 2x its November 2025 round) on $300M ARR, 2 million paid subscribers, and 7 million songs generated daily. ElevenLabs sits at $11B with $500M ARR (up from $350M in five months) and licensing deals with Merlin, Kobalt, and Believe. BlackRock, Wellington, Jamie Foxx, and Eva Longoria are in.

What this means for you → Two models are forming. Suno is winning on volume, largely ahead of licensing. ElevenLabs is winning on capital, with licensing already in place. Whichever model writes the bigger checks to artists will set the floor.
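The Suno and ElevenLabs figures translate into simple revenue multiples. This is back-of-envelope arithmetic on the reported numbers only. ElevenLabs' ARR covers its voice products as well as music, so the two multiples are not a like-for-like comparison of music businesses.

```python
def revenue_multiple(valuation: float, arr: float) -> float:
    """Valuation divided by annual recurring revenue (ARR)."""
    return valuation / arr


suno = revenue_multiple(5e9, 300e6)         # reported $5B on $300M ARR
elevenlabs = revenue_multiple(11e9, 500e6)  # reported $11B on $500M ARR
growth = (500e6 - 350e6) / 350e6            # ElevenLabs ARR growth over five months

print(f"Suno: {suno:.1f}x ARR")                # Suno: 16.7x ARR
print(f"ElevenLabs: {elevenlabs:.1f}x ARR")    # ElevenLabs: 22.0x ARR
print(f"ElevenLabs ARR growth: {growth:.0%}")  # ElevenLabs ARR growth: 43%
```

On these figures, each dollar of ElevenLabs ARR is valued about 1.3x as highly as a dollar of Suno's.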

Google embedded its Lyria 3 Pro model directly into Believe's artist ecosystem (TuneCore parent, €1.07B revenue in 2025, 2 million artists). Lyria 3 Pro generates tracks up to 3 minutes (vs. 30 seconds before). Google retains no ownership of generated content. Outputs carry SynthID watermarks. Training was licensed via YouTube agreements, sidestepping the Suno-Udio litigation pattern. An ambassador program lets selected artists shape the tool weekly.

Splice's ML R&D team launched Variations, a feature that takes one sample and generates new ones carrying the original's full latent identity (room acoustics, signal chain, effects, and the player's articulation). Available now via the Splice Plugin. The framing matters as much as the feature: ML lead Alejandro Koretzky calls it "semantic sound design," and the catalog now becomes "dynamic, adaptable, liquid."

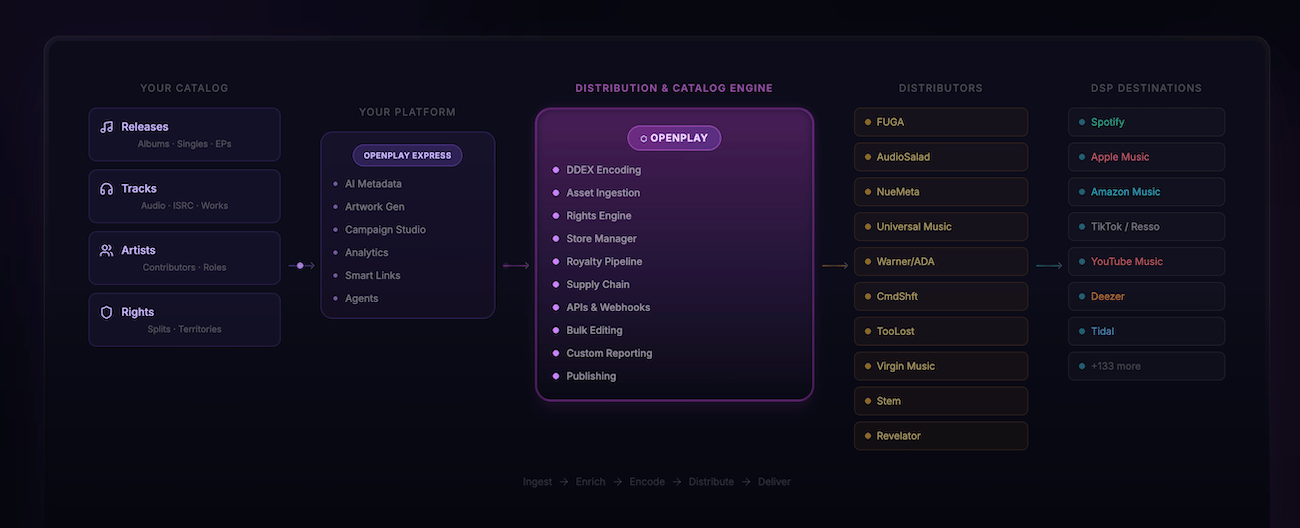

OpenPlay Pulse: the AI operating system labels are quietly being onboarded to

The lede. OpenPlay launched Pulse this week, an AI-native operating system built for labels, distributors, publishers, and rights holders. First public walkthroughs happen at Music Biz 2026 in Atlanta next week (May 11 to 14). OpenPlay says teams are already using it.

Why it matters. OpenPlay is already inside major labels for distribution, metadata, and DDEX workflows. Pulse adds an AI agent layer above that infrastructure. For label ops teams, this is the first AI tool that fits inside the stack they already run, rather than asking them to rip something out and replace it. That's the difference between a product launch and an operating system rollout.

What's in Pulse

Per the launch announcement, Pulse covers catalog operations, marketing workflows, analytics, creative generation, and AI agents trained for music ops. Specific use cases listed: campaign plans, EPKs, playlist pitches, snippets, lyric videos, metadata workflows, audience insights. All "from your catalog context in one system."

5 product pillars (catalog, marketing, analytics, creative, agents)

Built on OpenPlay's enterprise infrastructure

Self-described as "AI-native" rather than retrofitted

The pattern this week

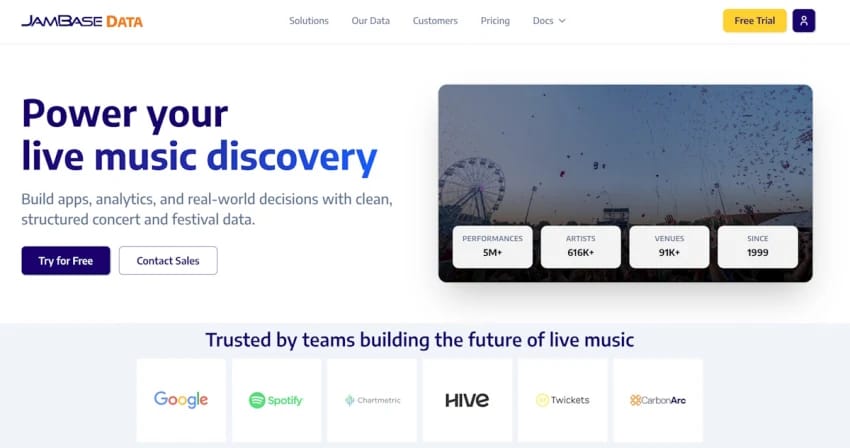

Pulse landed in the same week as Splice Variations (sample DNA into agent-callable variations) and JamBase Data + MCP server (5 million live performances exposed via Model Context Protocol for AI agents). Three independent companies, same week, same move: turn a static music asset into something an AI agent can call.

"Pulse is an AI-native operating system for music and media teams." OpenPlay, in the company's launch post on LinkedIn.

The unanswered question. OpenPlay hasn't disclosed what its AI agents are trained on, who owns the outputs, or how Pulse handles AI disclosure when a label's marketing agent generates assets that touch a copyrighted catalog. The Music Biz walkthroughs next week are the place to ask. The product page renders only with JavaScript and answers none of these questions.

The takeaway for music industry pros. For label ops, distributor ops, and publishing teams, Pulse is the first AI tooling that sits where the work already happens. For indie creators on Suno or Udio, it's a reminder: labels are not standing still while the lawsuits play out. They're building infrastructure quietly.

Music Intelligence

Jaavid Aktar Husain and Dorien Herremans at the AMAAI Lab (Singapore University of Technology and Design) trained APEX on 211,000 songs (10,000 hours of audio) from Suno and Udio. The model jointly predicts engagement signals (streams, likes) and 5 perceptual aesthetic dimensions from frozen MERT audio embeddings. The single insight worth pulling: on the Music Arena dataset, the aesthetic features generalized to 11 generative systems the model never saw during training. Aesthetics and popularity are complementary, not redundant.
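The "frozen embeddings plus a prediction head" setup is easy to picture in code. The sketch below is the simplest instance of it, a linear probe, and not the APEX architecture: random vectors stand in for MERT embeddings (the 768-dimensional width is an assumption), the seven targets (two engagement signals, five aesthetic dimensions) are synthetic, and one closed-form ridge regression fits all seven outputs over the same frozen features. The embeddings are never updated. Only the head is trained.

```python
import numpy as np

# Hypothetical stand-ins: random vectors play the role of frozen audio embeddings,
# and the 7 targets mimic "2 engagement signals + 5 aesthetic dimensions".
TARGETS = ["streams", "likes"] + [f"aesthetic_{i}" for i in range(1, 6)]
N_SONGS, EMB_DIM = 2000, 768  # 768 is an assumed embedding width

rng = np.random.default_rng(0)
X = rng.normal(size=(N_SONGS, EMB_DIM))  # frozen features: never modified below
W_true = rng.normal(size=(EMB_DIM, len(TARGETS)))
Y = X @ W_true + 0.1 * rng.normal(size=(N_SONGS, len(TARGETS)))


def fit_head(X: np.ndarray, Y: np.ndarray, l2: float = 1.0) -> np.ndarray:
    """Closed-form ridge regression: one solve yields weights for every target."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + l2 * np.eye(d), X.T @ Y)


def r2_per_target(Y: np.ndarray, Y_hat: np.ndarray) -> np.ndarray:
    """Coefficient of determination, computed separately for each output column."""
    ss_res = ((Y - Y_hat) ** 2).sum(axis=0)
    ss_tot = ((Y - Y.mean(axis=0)) ** 2).sum(axis=0)
    return 1 - ss_res / ss_tot


train, test = slice(0, 1600), slice(1600, None)
W = fit_head(X[train], Y[train])
scores = r2_per_target(Y[test], X[test] @ W)
for name, score in zip(TARGETS, scores):
    print(f"{name}: held-out R^2 = {score:.3f}")
```

Freezing the encoder is what makes a result like APEX's cheap to test on new systems: only the small head is fit, so the same embeddings can be scored for tracks from generators the head never saw.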

DISCUSSION OF THE WEEK: Rasha Rahman on why fingerprinting fails for AI derivatives.

HumanStandard founder Rasha Rahman, on the Stick Figure case: "Real money taken from him because someone was able to bypass all existing detection and submit to a distributor. Since the AI generated song created a new 'style', typical fingerprinting companies could not detect." His policy answer in one sentence: AI-generated music can't hold its own copyright, so any revenue from an AI derivative should route to the original rights holder. HumanStandard is building the AI-native fingerprinting and registry to do it.
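Rahman's rule is simple to state as code. The hard part is everything upstream. In the sketch below, every name is hypothetical, and the `derived_from` field assumes a detector has already matched the AI track to an original, which is exactly the step today's fingerprinting misses. HumanStandard's actual registry design isn't described here.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Track:
    track_id: str
    uploader: str
    ai_generated: bool
    derived_from: Optional[str] = None  # hypothetical: original rights holder, if matched


def payee(track: Track) -> str:
    """Route AI derivatives to the original rights holder; everything else to the uploader."""
    if track.ai_generated and track.derived_from is not None:
        return track.derived_from
    return track.uploader


def settle(royalties: dict[str, float], tracks: dict[str, Track]) -> dict[str, float]:
    """Aggregate per-track royalties into per-payee totals."""
    totals: dict[str, float] = defaultdict(float)
    for track_id, amount in royalties.items():
        totals[payee(tracks[track_id])] += amount
    return dict(totals)


tracks = {
    "t1": Track("t1", "original_artist", ai_generated=False),
    "t2": Track("t2", "anonymous_uploader", ai_generated=True, derived_from="original_artist"),
    "t3": Track("t3", "ai_hobbyist", ai_generated=True),  # AI, but no matched original
}
print(settle({"t1": 120.0, "t2": 900.0, "t3": 15.0}, tracks))
# {'original_artist': 1020.0, 'ai_hobbyist': 15.0}
```

Note what the rule does not cover: an AI track with no matched original still pays its uploader, so the whole policy stands or falls on the quality of the derivative detector.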

IDEA WORTH SITTING WITH: Catalog as API

Splice Variations turns a sample into agent-callable DNA. JamBase Data + MCP exposes 5 million live performances, 600,000 artists, 90,000 venues, and 20,000 festivals to AI agents. OpenPlay Pulse turns a label's catalog into agent-callable workflows. Three independent companies shipped this pattern in the same week. The next 18 months of music tooling will be built on top of these rails, not on top of generation models.
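What "agent-callable" means in practice: a server advertises each capability as a tool with a name, a description, and a JSON Schema for its inputs, and the agent calls it by name with arguments. The sketch below mimics the shape of an MCP `tools/list` descriptor and `tools/call` result in plain Python, with no MCP library and no real transport. The `find_performances` tool and the catalog records are hypothetical. JamBase's actual tools and fields may differ.

```python
import json
from typing import Optional

# Hypothetical in-memory catalog; a real server would query a database or API.
PERFORMANCES = [
    {"artist": "Artist A", "venue": "Venue X", "city": "Atlanta", "date": "2026-05-20"},
    {"artist": "Artist A", "venue": "Venue Y", "city": "Nashville", "date": "2026-06-02"},
    {"artist": "Artist B", "venue": "Venue X", "city": "Atlanta", "date": "2026-05-22"},
]

# Tool descriptor in the shape MCP uses: name, description, inputSchema (JSON Schema).
FIND_PERFORMANCES_TOOL = {
    "name": "find_performances",
    "description": "List live performances for an artist, optionally filtered by city.",
    "inputSchema": {
        "type": "object",
        "properties": {"artist": {"type": "string"}, "city": {"type": "string"}},
        "required": ["artist"],
    },
}


def find_performances(artist: str, city: Optional[str] = None) -> list:
    """Return performances for an artist, optionally restricted to one city."""
    return [
        p for p in PERFORMANCES
        if p["artist"] == artist and (city is None or p["city"] == city)
    ]


HANDLERS = {"find_performances": find_performances}


def handle_tools_list() -> dict:
    """What an agent sees when it asks the server which tools exist."""
    return {"tools": [FIND_PERFORMANCES_TOOL]}


def handle_tools_call(params: dict) -> dict:
    """Dispatch a tools/call request by name and wrap the result as text content."""
    handler = HANDLERS[params["name"]]
    result = handler(**params.get("arguments", {}))
    return {"content": [{"type": "text", "text": json.dumps(result)}]}


response = handle_tools_call(
    {"name": "find_performances", "arguments": {"artist": "Artist A", "city": "Atlanta"}}
)
print(response["content"][0]["text"])
# [{"artist": "Artist A", "venue": "Venue X", "city": "Atlanta", "date": "2026-05-20"}]
```

A real server would sit behind an MCP SDK and speak JSON-RPC over stdio or HTTP. The design point is that the catalog owner, not the model, defines what the agent can ask for.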

Stick Figure got his rights routed back because he had a fanbase he could mobilize. That's leverage. Most artists don't have it.

The same week, Suno raised at $5B on volume (7 million songs a day), and ElevenLabs raised at $11B on licensing (€11M paid to creators, deals with Merlin, Kobalt, Believe). Capital is making a bet on both sides. Volume on one. Taste on the other. Both scale faster than any working artist can.

The thing AI can't generate is the part that makes someone open the email. Trust. A relationship. A reason to care. That's leverage. That's also the only durable asset when 75,000 AI tracks land on Deezer every day.

I think the play is the same as it was when Myspace folded. Build leverage on something you own. The audience. The story. The taste. AI is a tool. Your judgment is the skill. The next two years will sort the people who get that from the people who don't.

Connect the JamBase MCP server to your AI agent of choice.

JamBase opened its full data platform this week. 5 million live performances, 600,000 artists, 90,000 venues, all exposed through an API and a Model Context Protocol server that plugs into Claude, ChatGPT, or whatever you use. Spend 20 minutes asking your agent to map your city's live calendar against an artist you'd want to support. Where are they playing in the next 90 days? Which venues are on their route? What does their tour history look like? You'll get a sharper sense of how the catalog-as-API pattern actually feels in your hands than any product demo can show you.
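Once the data comes back, the questions in this exercise reduce to a filter and a sort. The sketch assumes the agent has already fetched performance records shaped like the hypothetical ones below (artist, venue, city, date). It keeps one artist's shows in the next 90 days and orders them into a route. "Today" is pinned to this issue's date so the output is reproducible.

```python
from datetime import date, timedelta

# Hypothetical records, shaped like what an events API might return.
SHOWS = [
    {"artist": "Artist A", "venue": "Venue 1", "city": "Atlanta", "date": date(2026, 5, 20)},
    {"artist": "Artist A", "venue": "Venue 2", "city": "Nashville", "date": date(2026, 6, 2)},
    {"artist": "Artist A", "venue": "Venue 3", "city": "Chicago", "date": date(2026, 9, 15)},
    {"artist": "Artist B", "venue": "Venue 1", "city": "Atlanta", "date": date(2026, 5, 22)},
    {"artist": "Artist A", "venue": "Venue 4", "city": "Charlotte", "date": date(2026, 5, 12)},
]


def upcoming_route(shows: list, artist: str, today: date, window_days: int = 90) -> list:
    """Return one artist's shows between today and today + window_days, in date order."""
    end = today + timedelta(days=window_days)
    mine = [s for s in shows if s["artist"] == artist and today <= s["date"] <= end]
    return sorted(mine, key=lambda s: s["date"])


route = upcoming_route(SHOWS, "Artist A", today=date(2026, 5, 8))
for show in route:
    print(show["date"].isoformat(), show["venue"], show["city"])
# 2026-05-12 Venue 4 Charlotte
# 2026-05-20 Venue 1 Atlanta
# 2026-06-02 Venue 2 Nashville
```

The Chicago date falls outside the 90-day window and drops out, which is the kind of answer you should expect your agent to reproduce from the live data.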

Forward this to a colleague:

If this issue gave you signal you didn't have an hour ago, the single best thing you can do is forward it to one person in your network who should be reading it: a colleague at your label, a manager you respect, a founder building in this space.

That's how this briefing grows. One trusted forward at a time.

About The AI Music Briefing

The AI Music Briefing is a weekly Friday read for music industry professionals working at the intersection of AI and the traditional music business. Curated and written by Christopher Wieduwilt, founder of The AI Musicpreneur.

Got a tip, a story, or a partnership idea? Reply to this email. Every message lands directly in my inbox.

Always rooting for you,

Chris
